Elasticsearch Essentials: Index Aliases, Analyzers, Document Management, Routing, and Search Explained (Part 1)

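Introduction: We previously shared a detailed Elasticsearch tutorial covering getting started, index management, and mappings. This article covers index aliases, analyzers, document management, routing, and search in detail.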

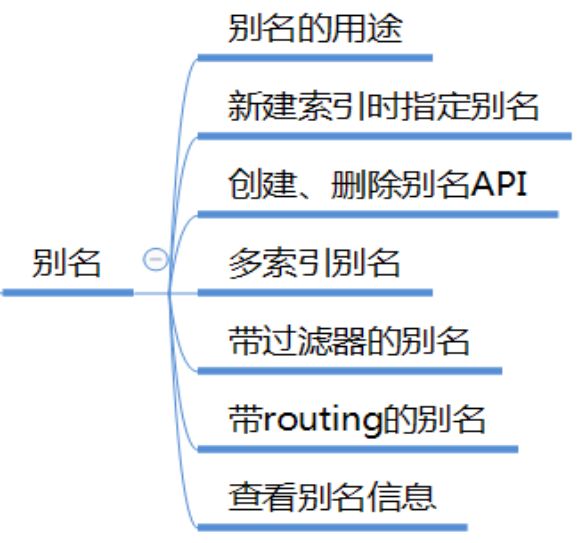

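I. Index Aliases

1. What aliases are for

Use an alias when a single query needs to span multiple indices.

Use an alias when you want to work with an index through a view, much like a view in a relational database.

The alias mechanism lets us operate on the indices in a cluster through such views. A view can cover several indices, a single index, or part of an index.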

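2. Defining aliases when creating an index

The request below defines two aliases, current_day and 2016, as part of index creation:

PUT /logs_20162801

{

"mappings" : {

"type" : {

"properties" : {

"year" : {"type" : "integer"}

}

}

},

"aliases" : {

"current_day" : {},

"2016" : {

"filter" : { "term" : {"year" : 2016 } }

}

}

}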

3. Creating an alias with /_aliases

Create the alias alias1 for the index test1:

POST /_aliases

{

"actions" : [

{ "add" : { "index" : "test1", "alias" : "alias1" } }

]

}

4. Removing an alias

POST /_aliases

{

"actions" : [

{ "remove" : { "index" : "test1", "alias" : "alias1" } }

]

}

The same removal can also be written as:

DELETE /{index}/_alias/{name}

5. Changing aliases in bulk

Remove the alias alias1 from index test1 and, in the same request, add alias1 to index test2:

POST /_aliases

{

"actions" : [

{ "remove" : { "index" : "test1", "alias" : "alias1" } },

{ "add" : { "index" : "test2", "alias" : "alias1" } }

]

}
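Because both actions travel in one request, the alias moves from test1 to test2 in a single step. The following is a minimal Python sketch of how such a request could be assembled with the standard library only. The helper names and the base URL http://localhost:9200 are assumptions made for illustration, and the request is prepared but deliberately not sent.

```python
import json
import urllib.request


def swap_alias_actions(alias, old_index, new_index):
    """Build the body that moves `alias` from old_index to new_index."""
    return {
        "actions": [
            {"remove": {"index": old_index, "alias": alias}},
            {"add": {"index": new_index, "alias": alias}},
        ]
    }


def build_aliases_request(base_url, body):
    """Prepare (but do not send) a POST /_aliases request."""
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/_aliases",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


body = swap_alias_actions("alias1", "test1", "test2")
# Assumed address of a local node; adjust for a real cluster.
request = build_aliases_request("http://localhost:9200", body)

print(request.get_method(), request.full_url)
print(json.dumps(body, indent=2))
# To actually send it: urllib.request.urlopen(request)
```

Keeping the body builder separate from the transport makes it easy to inspect the actions before anything touches the cluster.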

6. Giving several indices the same alias

Option 1:

POST /_aliases

{

"actions" : [

{ "add" : { "index" : "test1", "alias" : "alias1" } },

{ "add" : { "index" : "test2", "alias" : "alias1" } }

]

}

Option 2:

POST /_aliases

{

"actions" : [

{ "add" : { "indices" : ["test1", "test2"], "alias" : "alias1" } }

]

}

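Note: an alias that points to multiple indices can only be used for searching. You cannot index documents or get a document by ID through it.

Option 3: use a wildcard (*) pattern to select the indices to alias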

POST /_aliases

{

"actions" : [

{ "add" : { "index" : "test*", "alias" : "all_test_indices" } }

]

}

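Note: in this case the alias is a point-in-time alias. It is applied to all indices that match the pattern at the moment the request runs, and it is not updated automatically when indices matching the pattern are later added or removed.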
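The point-in-time behavior can be pictured with a small Python simulation. This is only a sketch of the idea, not how Elasticsearch implements it: the set of index names and the resolve_alias_members helper are hypothetical, and fnmatch stands in for the server-side wildcard matching.

```python
from fnmatch import fnmatchcase


def resolve_alias_members(pattern, existing_indices):
    """Return the indices matching `pattern` at the moment the alias is created."""
    return sorted(name for name in existing_indices if fnmatchcase(name, pattern))


indices = {"test1", "test2", "prod1"}

# The wildcard is expanded once, when the alias action runs.
alias_members = resolve_alias_members("test*", indices)

# An index created later matches the pattern but is not picked up.
indices.add("test3")

print(alias_members)
print("test3" in alias_members)
```

To include the new index, the alias action would have to be issued again.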

7. Filtered aliases

The field used in the filter must already exist in the index mapping:

PUT /test1

{

"mappings": {

"type1": {

"properties": {

"user" : {

"type": "keyword"

}

}

}

}

}

The filter is defined with the Query DSL and is applied to the operations performed through the alias, such as Search and Count:

POST /_aliases

{

"actions" : [

{

"add" : {

"index" : "test1",

"alias" : "alias2",

"filter" : { "term" : { "user" : "kimchy" } }

}

}

]

}
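A filtered alias behaves like a view that only exposes matching documents. The sketch below imitates that effect in plain Python for a term filter. The sample documents and the search_via_filtered_alias helper are made up for illustration, and exact equality is used as a stand-in for a term query on a keyword field.

```python
def search_via_filtered_alias(documents, term_filter):
    """Return only the documents whose fields equal every value in term_filter."""
    return [
        doc
        for doc in documents
        if all(doc.get(field) == value for field, value in term_filter.items())
    ]


# Hypothetical contents of index test1.
test1_docs = [
    {"user": "kimchy", "message": "first"},
    {"user": "other", "message": "second"},
    {"user": "kimchy", "message": "third"},
]

# Same condition as the filter attached to alias2.
alias2_filter = {"user": "kimchy"}

visible = search_via_filtered_alias(test1_docs, alias2_filter)
print([doc["message"] for doc in visible])
```

Searching test1 directly would see all three documents, while searching through alias2 would see only the two that belong to kimchy.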

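8. Aliases with routing

A routing value can be specified in the alias definition, optionally together with a filter. It restricts operations to the relevant shards and avoids unnecessary work on the others.

POST /_aliases

{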

"actions" : [

{

"add" : {

"index" : "test",

"alias" : "alias1",

"routing" : "1"

}

}

]

}

Different routing values can be specified for searching and for indexing:

POST /_aliases

{

"actions" : [

{

"add" : {

"index" : "test",

"alias" : "alias2",

"search_routing" : "1,2",

"index_routing" : "2"

}

}

]

}
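To see why routing limits the shards involved, here is a simplified Python sketch. It assumes the common "hash of the routing value modulo the number of primary shards" model. Elasticsearch uses its own hash function and a more detailed formula, so crc32, the shard count of 5, and both helper names are stand-ins chosen only for illustration.

```python
import zlib


def shard_for(routing_value, num_primary_shards):
    """Pick a shard from a routing value (crc32 is a stand-in hash for this sketch)."""
    return zlib.crc32(routing_value.encode("utf-8")) % num_primary_shards


def shards_for_search(search_routing, num_primary_shards):
    """Resolve a comma-separated search_routing string to the set of target shards."""
    values = [v.strip() for v in search_routing.split(",") if v.strip()]
    return {shard_for(v, num_primary_shards) for v in values}


NUM_SHARDS = 5  # hypothetical primary shard count

# alias2 above: index_routing "2", search_routing "1,2"
index_shard = shard_for("2", NUM_SHARDS)
search_shards = shards_for_search("1,2", NUM_SHARDS)

print("documents indexed via alias2 go to shard", index_shard)
print("searches via alias2 touch shards", sorted(search_shards))
```

Whatever the real hash is, the point carries over: two routing values can reach at most two shards, and the shard used for indexing is among the shards searched.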

9. Defining an alias with PUT

PUT /{index}/_alias/{name}

PUT /logs_201305/_alias/2013

With filter and routing:

PUT /users

{

"mappings" : {

"user" : {

"properties" : {

"user_id" : {"type" : "integer"}

}

}

}

}

PUT /users/_alias/user_12

{

"routing" : "12",

"filter" : {

"term" : {

"user_id" : 12

}

}

}

10. Viewing alias definitions

GET /{index}/_alias/{alias}

GET /logs_20162801/_alias/*

GET /_alias/2016

GET /_alias/20*

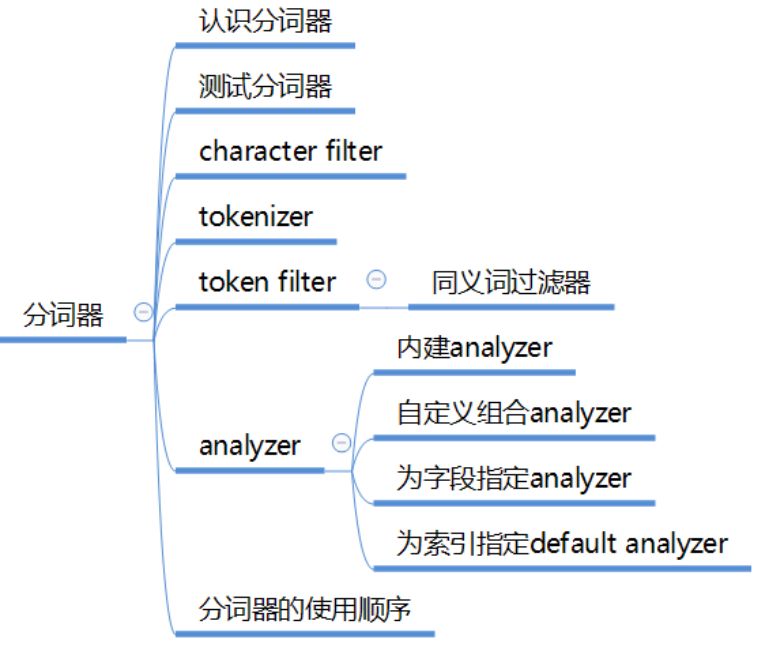

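II. Analyzers

1. Understanding analyzers

1.1 Analyzer

In ES, an analyzer is composed of the following three kinds of components:

character filter: preprocesses the raw text as a stream of characters, for example by stripping HTML tags, and then hands the result to the tokenizer. An analyzer may contain zero or more character filters, which are applied in the order they are configured.

tokenizer: splits the text into tokens. An analyzer must contain exactly one tokenizer.

token filter: processes the tokens produced by the tokenizer, for example by lowercasing them, removing stop words, or handling synonyms. An analyzer may contain zero or more token filters, which are applied in the order they are configured.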

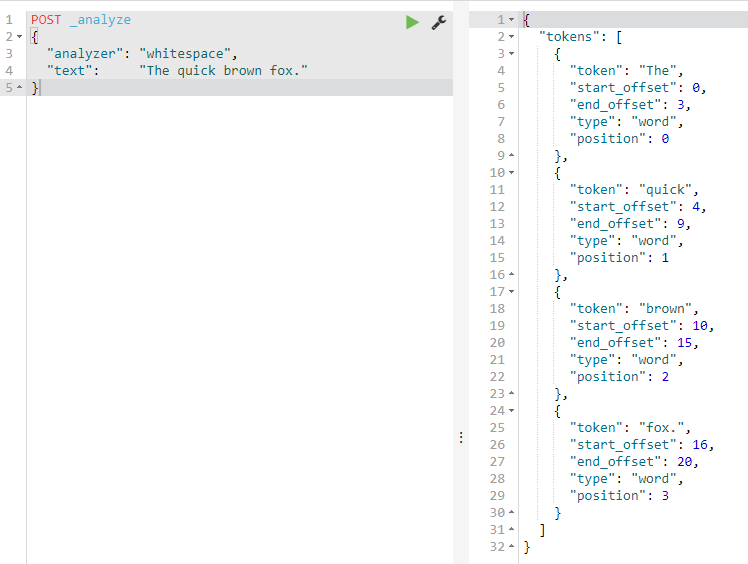
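The three stages can be mimicked in a few lines of Python. This is a toy model meant only to show the order of processing. None of the functions below are Elasticsearch APIs, and the tag stripping and stop word list are deliberately simplistic.

```python
import html
import re


def strip_tags(text):
    """Character filter stand-in: drop tags and decode HTML entities."""
    return html.unescape(re.sub(r"<[^>]+>", "", text))


def whitespace_tokenizer(text):
    """Tokenizer stand-in: split on whitespace."""
    return text.split()


def lowercase_filter(tokens):
    """Token filter stand-in: lowercase every token."""
    return [token.lower() for token in tokens]


def make_stop_filter(stopwords):
    """Token filter factory: remove tokens found in `stopwords`."""
    def stop_filter(tokens):
        return [token for token in tokens if token not in stopwords]
    return stop_filter


def analyze(text, char_filters, tokenizer, token_filters):
    """Run zero or more char filters, exactly one tokenizer, then zero or more token filters."""
    for char_filter in char_filters:
        text = char_filter(text)
    tokens = tokenizer(text)
    for token_filter in token_filters:
        tokens = token_filter(tokens)
    return tokens


tokens = analyze(
    "<p>The QUICK brown fox</p>",
    char_filters=[strip_tags],
    tokenizer=whitespace_tokenizer,
    token_filters=[lowercase_filter, make_stop_filter({"the"})],
)
print(tokens)  # ['quick', 'brown', 'fox']
```

Order matters here: the stop filter only removes "The" because the lowercase filter ran first.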

1.2 How to test an analyzer

POST _analyze

{

"analyzer": "whitespace",

"text": "The quick brown fox."

}

POST _analyze

{

"tokenizer": "standard",

"filter": [ "lowercase", "asciifolding" ],

"text": "Is this déja vu?"

}

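position: the ordinal position of the token in the token stream

offset: the start and end character offsets of the token in the original text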

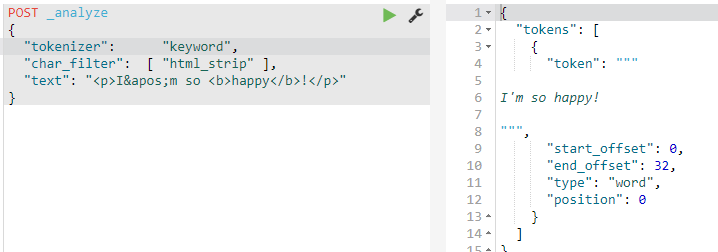
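To make position and offset concrete, the sketch below computes them in Python for whitespace-separated tokens. It imitates the shape of the _analyze response for the whitespace analyzer but is not the Elasticsearch implementation. The whitespace_tokens helper is hypothetical.

```python
import re


def whitespace_tokens(text):
    """Split on whitespace and record each token's position and character offsets."""
    return [
        {
            "token": match.group(),
            "start_offset": match.start(),
            "end_offset": match.end(),
            "position": position,
        }
        for position, match in enumerate(re.finditer(r"\S+", text))
    ]


for token in whitespace_tokens("The quick brown fox."):
    print(token)
```

Positions count tokens from 0, while offsets count characters, with end_offset pointing one past the token's last character.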

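2. Built-in character filters

HTML Strip Character Filter

html_strip: strips HTML tags and decodes HTML entities such as &amp;.

Mapping Character Filter

mapping: replaces occurrences of the specified strings in the text with the configured replacements.

Pattern Replace Character Filter

pattern_replace: performs regular-expression replacement.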

2.1 HTML Strip Character Filter

POST _analyze

{

"tokenizer": "keyword",

"char_filter": [ "html_strip" ],

"text": "<p>I'm so <b>happy</b>!</p>"

}

Configuring it in an index:

PUT my_index

{

"settings": {

"analysis": {

"analyzer": {

"my_analyzer": {

"tokenizer": "keyword",

"char_filter": ["my_char_filter"]

}

},

"char_filter": {

"my_char_filter": {

"type": "html_strip",

"escaped_tags": ["b"]

}

}

}

}

}

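escaped_tags lists the tags that should be left in place. If no tags need to be preserved, there is no need to define a custom character filter; reference html_strip directly in my_analyzer above.

Test: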

POST my_index/_analyze

{

"analyzer": "my_analyzer",

"text": "<p>I'm so <b>happy</b>!</p>"

}
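The effect of escaped_tags can be approximated in Python as follows. This is a rough regex-based sketch, not the real filter: as far as I know the actual html_strip filter also replaces block-level tags such as p with a newline, which this version does not attempt. The html_strip function name simply mirrors the filter's name.

```python
import html
import re

# Matches an opening or closing tag and captures the tag name.
TAG_PATTERN = re.compile(r"</?([A-Za-z][A-Za-z0-9]*)[^>]*>")


def html_strip(text, escaped_tags=()):
    """Remove HTML tags except those listed in escaped_tags, then decode entities."""
    keep = {tag.lower() for tag in escaped_tags}

    def replace(match):
        # Leave the tag untouched when its name is in the exception list.
        return match.group(0) if match.group(1).lower() in keep else ""

    return html.unescape(TAG_PATTERN.sub(replace, text))


sample = "<p>I'm so <b>happy</b>!</p>"
print(html_strip(sample))
print(html_strip(sample, escaped_tags=["b"]))
```

With the exception list set to b, the paragraph tags disappear while the bold tags survive, which is the behavior the my_char_filter configuration asks for.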

2.2 Mapping character filter

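Official documentation: https://www.elastic.co/guide/en/elasticsearch/reference/current/analysis-mapping-charfilter.html

my_index was already created in section 2.1, so delete it first (DELETE my_index) before running the request below.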

PUT my_index

{

"settings": {

"analysis": {

"analyzer": {

"my_analyzer": {

"tokenizer": "keyword",

"char_filter": [

"my_char_filter"

]

}

},

"char_filter": {

"my_char_filter": {

"type": "mapping",

"mappings": [

"٠ => 0",

"١ => 1",

"٢ => 2",

"٣ => 3",

"٤ => 4",

"٥ => 5",

"٦ => 6",

"٧ => 7",

"٨ => 8",

"٩ => 9"

]

}

}

}

}

}

Test:

POST my_index/_analyze

{

"analyzer": "my_analyzer",

"text": "My license plate is ٢٥٠١٥"

}
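The same digit normalization can be reproduced in Python to see what the analyzer receives after the character filter runs. This sketch parses rules written in the "source => target" form used above. The make_mapping_char_filter helper is hypothetical, and preferring longer keys first is my assumption about how overlapping rules should be resolved.

```python
import re


def make_mapping_char_filter(rules):
    """Build a function that applies 'source => target' string replacements."""
    mapping = {}
    for rule in rules:
        source, target = (part.strip() for part in rule.split("=>", 1))
        mapping[source] = target
    # Try longer keys first so overlapping keys resolve to the longest match.
    keys = sorted(mapping, key=len, reverse=True)
    pattern = re.compile("|".join(re.escape(key) for key in keys))
    return lambda text: pattern.sub(lambda m: mapping[m.group(0)], text)


# Arabic-Indic digits U+0660..U+0669 mapped to ASCII 0..9, as in my_char_filter.
rules = [f"{chr(0x0660 + digit)} => {digit}" for digit in range(10)]
normalize_digits = make_mapping_char_filter(rules)

plate = "My license plate is \u0662\u0665\u0660\u0661\u0665"
print(normalize_digits(plate))  # My license plate is 25015
```

Since my_analyzer uses the keyword tokenizer, the whole normalized string would come out as a single token.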

2.3 Pattern Replace Character Filter

As before, delete my_index first if it still exists from the previous example.

PUT my_index

{

"settings": {

"analysis": {

"analyzer": {

"my_analyzer": {

"tokenizer": "standard",

"char_filter": [

"my_char_filter"

]

}

},

"char_filter": {

"my_char_filter": {

"type": "pattern_replace",

"pattern": "(\\d+)-(?=\\d)",

"replacement": "$1_"

}

}

}

}

}

Test:

POST my_index/_analyze

{

"analyzer": "my_analyzer",

"text": "My credit card is 123-456-789"

}
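The regular expression can be tried out in Python before putting it into index settings. The pattern is the one from my_char_filter. The only differences are that Python writes the group reference as \1 instead of $1, and that a raw string removes the need for the doubled backslashes that JSON requires.

```python
import re

# (\d+)-(?=\d): digits followed by a hyphen, but only when another digit comes next.
PATTERN = re.compile(r"(\d+)-(?=\d)")


def pattern_replace(text):
    """Replace the hyphen between digit groups with an underscore."""
    return PATTERN.sub(r"\1_", text)


print(pattern_replace("My credit card is 123-456-789"))  # My credit card is 123_456_789
print(pattern_replace("up-to-date"))                     # up-to-date
```

The lookahead keeps the digit after the hyphen out of the match, so it is still available as the start of the next match.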

3. Built-in tokenizers

Official documentation: https://www.elastic.co/guide/en/elasticsearch/reference/current/analysis-tokenizers.html

Standard Tokenizer

Letter Tokenizer

Lowercase Tokenizer

Whitespace Tokenizer

UAX URL Email Tokenizer

Classic Tokenizer

Thai Tokenizer

NGram Tokenizer

Edge NGram Tokenizer

Keyword Tokenizer

Pattern Tokenizer

Simple Pattern Tokenizer

Simple Pattern Split Tokenizer

Path Hierarchy Tokenizer

The Chinese analysis plugin IK Analyzer, integrated earlier, provides the tokenizers ik_smart and ik_max_word.

Testing a tokenizer:

POST _analyze

{

"tokenizer": "standard",

"text": "张三说的确实在理"

}

POST _analyze

{

"tokenizer": "ik_smart",

"text": "张三说的确实在理"

}

4. Built-in token filters

ES ships with many token filters. For details see: https://www.elastic.co/guide/en/elasticsearch/reference/current/analysis-tokenfilters.html

Lowercase Token Filter: lowercase, converts tokens to lowercase

Stop Token Filter: stop, removes stop words

Synonym Token Filter: synonym, handles synonyms

Note: the Chinese analysis plugin IK Analyzer has stop word filtering built in.

4.1 Synonym Token Filter

PUT /test_index

{

"settings": {

"index" : {

"analysis" : {

"analyzer" : {

"my_ik_synonym" : {

"tokenizer" : "ik_smart",

"filter" : ["synonym"]

}

},

"filter" : {

"synonym" : {

"type" : "synonym",

"synonyms_path" : "analysis/synonym.txt"

}

}

}

}

}

}

synonyms_path specifies the synonym file, as a path relative to the config directory.

Synonym definition format

ES supports two synonym formats: Solr and WordNet.

Define the following synonyms in analysis/synonym.txt using the Solr format:

张三,李四

电饭煲,电饭锅 => 电饭煲

电脑 => 计算机,computer

Note:

The file must be UTF-8 encoded.

Each line defines one group of synonyms; => means "normalize to".
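One way to read the three rules above is to parse them into a lookup table. The Python sketch below does this under simple assumptions: a comma-only line makes every term expand to the whole group, and a line with => maps each term on the left to the terms on the right. The real synonym filter emits synonyms at the same token position rather than flattening them into a list, and the input token list here is invented rather than actual ik_smart output.

```python
def parse_solr_synonyms(lines):
    """Turn Solr-format synonym rules into {source_term: [replacement_terms]}."""
    table = {}
    for raw in lines:
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        if "=>" in line:
            left, right = line.split("=>", 1)
            sources = [term.strip() for term in left.split(",")]
            targets = [term.strip() for term in right.split(",")]
        else:
            # Equivalent synonyms: each term expands to the whole group.
            sources = targets = [term.strip() for term in line.split(",")]
        for source in sources:
            replacements = table.setdefault(source, [])
            for target in targets:
                if target not in replacements:
                    replacements.append(target)
    return table


def apply_synonyms(tokens, table):
    """Replace each token by its synonyms, leaving unknown tokens unchanged."""
    result = []
    for token in tokens:
        result.extend(table.get(token, [token]))
    return result


rules = ["张三,李四", "电饭煲,电饭锅 => 电饭煲", "电脑 => 计算机,computer"]
table = parse_solr_synonyms(rules)

# Hypothetical token list, not real tokenizer output.
print(apply_synonyms(["买", "电饭锅", "电脑"], table))
```

Under this reading, 电饭锅 is normalized to 电饭煲, 电脑 is replaced by both 计算机 and computer, and 张三 and 李四 each expand to both names.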

Test: run the examples below and inspect the results to understand how synonyms are handled.

POST test_index/_analyze

{

"analyzer": "my_ik_synonym",

"text": "张三说的确实在理"

}

POST test_index/_analyze

{

"analyzer": "my_ik_synonym",

"text": "我想买个电饭锅和一个电脑"

}

5. Built-in analyzers

Official documentation: https://www.elastic.co/guide/en/elasticsearch/reference/current/analysis-analyzers.html

Standard Analyzer

Simple Analyzer

Whitespace Analyzer

Stop Analyzer

Keyword Analyzer

Pattern Analyzer

Language Analyzers

Fingerprint Analyzer

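The integrated Chinese analysis plugin IK Analyzer provides the analyzers ik_smart and ik_max_word.

Built-in and plugin-provided analyzers can be used directly. If they do not meet our needs, we can combine character filters, a tokenizer, and token filters to define a custom analyzer.

5.1 Custom analyzers

Configuration: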

PUT my_index8

{

"settings": {

"analysis": {

"analyzer": {

"my_ik_analyzer": {

"type": "custom",

"tokenizer": "ik_smart",

"char_filter": [

"html_strip"

],

"filter": [

"synonym"

]

}

},

"filter": {

"synonym": {

"type": "synonym",

"synonyms_path": "analysis/synonym.txt"

}

}

}

}

}

5.2 Specifying an analyzer for a field

PUT my_index8/_mapping/_doc

{

"properties": {

"title": {

"type": "text",

"analyzer": "my_ik_analyzer"

}

}

}

If queries on the field need to use a different analyzer:

PUT my_index8/_mapping/_doc

{

"properties": {

"title": {

"type": "text",

"analyzer": "my_ik_analyzer",

"search_analyzer": "other_analyzer"

}

}

}

Testing the result:

PUT my_index8/_doc/1

{

"title": "张三说的确实在理"

}

GET /my_index8/_search

{

"query": {

"term": {

"title": "张三"

}

}

}

5.3 Defining a default analyzer for an index

PUT /my_index10

{

"settings": {

"analysis": {

"analyzer": {

"default": {

"tokenizer": "ik_smart",

"filter": [

"synonym"

]

}

},

"filter": {

"synonym": {

"type": "synonym",

"synonyms_path": "analysis/synonym.txt"

}

}

}

},

"mappings": {

"_doc": {

"properties": {

"title": {

"type": "text"

}

}

}

}

}

Testing the result:

PUT my_index10/_doc/1

{

"title": "张三说的确实在理"

}

GET /my_index10/_search

{

"query": {

"term": {

"title": "张三"

}

}

}

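6. Order in which analyzers are chosen

An analyzer can be specified per query, per field, and per index.

At index time, ES picks the analyzer in the following order:

The analyzer specified in the field mapping.

If the field mapping does not specify one, the analyzer named default in the index settings.

If the index settings do not define a default analyzer, the standard analyzer.

At search time, ES picks the analyzer in the following order: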

The analyzer defined in a full-text query.

The search_analyzer defined in the field mapping.

The analyzer defined in the field mapping.

An analyzer named default_search in the index settings.

An analyzer named default in the index settings.

The standard analyzer.
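The two lookup orders can be written down as a small Python sketch. It only models the precedence rules listed above and is not Elasticsearch code. The function names are hypothetical, and the field mappings reuse the title examples from section 5.

```python
def first_defined(candidates, fallback="standard"):
    """Return the first non-empty candidate, or the standard analyzer."""
    for name in candidates:
        if name:
            return name
    return fallback


def index_time_analyzer(field_mapping, index_analyzers):
    """Field mapping analyzer, then index-level 'default', then standard."""
    return first_defined([
        field_mapping.get("analyzer"),
        "default" if "default" in index_analyzers else None,
    ])


def search_time_analyzer(query_analyzer, field_mapping, index_analyzers):
    """Query, search_analyzer, analyzer, default_search, default, then standard."""
    return first_defined([
        query_analyzer,
        field_mapping.get("search_analyzer"),
        field_mapping.get("analyzer"),
        "default_search" if "default_search" in index_analyzers else None,
        "default" if "default" in index_analyzers else None,
    ])


title = {"type": "text", "analyzer": "my_ik_analyzer", "search_analyzer": "other_analyzer"}
plain = {"type": "text"}

print(index_time_analyzer(title, set()))         # my_ik_analyzer
print(search_time_analyzer(None, title, set()))  # other_analyzer
print(index_time_analyzer(plain, {"default"}))   # default
print(search_time_analyzer(None, plain, set()))  # standard
```

Note that a field's search_analyzer only affects queries; documents are still indexed with the field's analyzer.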